Workplace

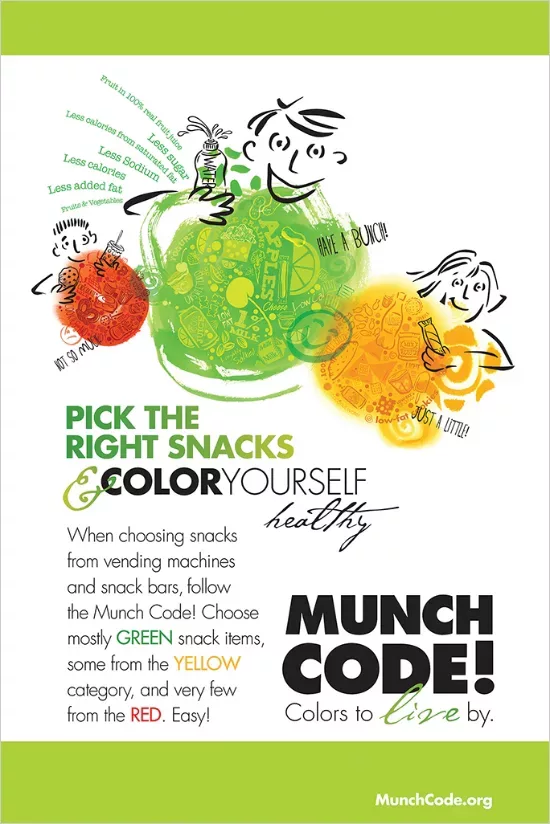

The workplace provides many opportunities for promoting health and emotional well-being. Employers can inspire positive change with workplace wellness programs and policies designed to reduce health risks that contribute to chronic disease. Even small steps can improve quality of life and support healthy behaviors. Check out our featured events, tools, and programs to enhance your worksite wellness program.

Workwell Events

Don’t miss an opportunity to connect with other South Dakota employers, HR professionals, health benefits managers, and staff with a passion for improving worksite wellness.

Workplace Wellness Toolkit

Improve your company’s bottom line by promoting healthy lifestyles. Learn how to decrease healthcare costs and retain key staff with our FREE toolkit.

The workplace is a great place to promote healthy lifestyles. Use the filters below to find inspiration, tips, recipes, and ideas on how to build a sustainable wellness program and inspire healthy habits!